To Cynthia

human was the music,

natural was the static…

—JOHN UPDIKE

Prologue

The Butterfly Effect

Edward Lorenz and his toy weather. The computer misbehaves. Long-range forecasting is doomed. Order masquerading as randomness. A world of nonlinearity. “We completely missed the point.”

Revolution

A revolution in seeing. Pendulum clocks, space balls, and playground swings. The invention of the horseshoe. A mystery solved: Jupiter’s Great Red Spot.

Life’s Ups and Downs

Modeling wildlife populations. Nonlinear science, “the study of non-elephant animals.” Pitchfork bifurcations and a ride on the Spree. A movie of chaos and a messianic appeal.

A Geometry of Nature

A discovery about cotton prices. A refugee from Bourbaki. Transmission errors and jagged shores. New dimensions. The monsters of fractal geometry. Quakes in the schizosphere. From clouds to blood vessels. The trash cans of science. “To see the world in a grain of sand.”

Strange Attractors

A problem for God. Transitions in the laboratory. Rotating cylinders and a turning point. David Ruelle’s idea for turbulence. Loops in phase space. Mille-feuilles and sausage. An astronomer’s mapping. “Fireworks or galaxies.”

Universality

A new start at Los Alamos. The renormalization group. Decoding color. The rise of numerical experimentation. Mitchell Feigenbaum’s breakthrough. A universal theory. The rejection letters. Meeting in Como. Clouds and paintings.

The Experimenter

Helium in a Small Box. “Insolid billowing of the solid.” Flow and form in nature. Albert Libchaber’s delicate triumph. Experiment joins theory. From one dimension to many.

Images of Chaos

The complex plane. Surprise in Newton’s method. The Mandelbrot set: sprouts and tendrils. Art and commerce meet science. Fractal basin boundaries. The chaos game.

The Dynamical Systems Collective

Santa Cruz and the sixties. The analog computer. Was this science? “A long-range vision.” Measuring unpredictability. Information theory. From microscale to macroscale. The dripping faucet. Audiovisual aids. An era ends.

Inner Rhythms

A misunderstanding about models. The complex body. The dynamical heart. Resetting the biological clock. Fatal arrhythmia. Chick embryos and abnormal beats. Chaos as health.

Chaos and Beyond

New beliefs, new definitions. The Second Law, the snowflake puzzle, and loaded dice. Opportunity and necessity.

Afterword

Notes on Sources and Further Reading

Acknowledgments

Index

THE POLICE IN THE SMALL TOWN of Los Alamos, New Mexico, worried briefly in 1974 about a man seen prowling in the dark, night after night, the red glow of his cigarette floating along the back streets. He would pace for hours, heading nowhere in the starlight that hammers down through the thin air of the mesas. The police were not the only ones to wonder. At the national laboratory some physicists had learned that their newest colleague was experimenting with twenty-six–hour days, which meant that his waking schedule would slowly roll in and out of phase with theirs. This bordered on strange, even for the Theoretical Division.

In the three decades since J. Robert Oppenheimer chose this unworldly New Mexico landscape for the atomic bomb project, Los Alamos National Laboratory had spread across an expanse of desolate plateau, bringing particle accelerators and gas lasers and chemical plants, thousands of scientists and administrators and technicians, as well as one of the world’s greatest concentrations of supercomputers. Some of the older scientists remembered the wooden buildings rising hastily out of the rimrock in the 1940s, but to most of the Los Alamos staff, young men and women in college-style corduroys and work shirts, the first bombmakers were just ghosts. The laboratory’s locus of purest thought was the Theoretical Division, known as T division, just as computing was C division and weapons was X division. More than a hundred physicists and mathematicians worked in T division, well paid and free of academic pressures to teach and publish. These scientists had experience with brilliance and with eccentricity. They were hard to surprise.

But Mitchell Feigenbaum was an unusual case. He had exactly one published article to his name, and he was working on nothing that seemed to have any particular promise. His hair was a ragged mane, sweeping back from his wide brow in the style of busts of German composers. His eyes were sudden and passionate. When he spoke, always rapidly, he tended to drop articles and pronouns in a vaguely Middle European way, even though he was a native of Brooklyn. When he worked, he worked obsessively. When he could not work, he walked and thought, day or night, and night was best of all. The twenty-four–hour day seemed too constraining. Nevertheless, his experiment in personal quasiperiodicity came to an end when he decided he could no longer bear waking to the setting sun, as had to happen every few days.

At the age of twenty-nine he had already become a savant among the savants, an ad hoc consultant whom scientists would go to see about any especially intractable problem, when they could find him. One evening he arrived at work just as the director of the laboratory, Harold Agnew, was leaving. Agnew was a powerful figure, one of the original Oppenheimer apprentices. He had flown over Hiroshima on an instrument plane that accompanied the Enola Gay, photographing the delivery of the laboratory’s first product.

“I understand you’re real smart,” Agnew said to Feigenbaum. “If you’re so smart, why don’t you just solve laser fusion?”

Even Feigenbaum’s friends were wondering whether he was ever going to produce any work of his own. As willing as he was to do impromptu magic with their questions, he did not seem interested in devoting his own research to any problem that might pay off. He thought about turbulence in liquids and gases. He thought about time—did it glide smoothly forward or hop discretely like a sequence of cosmic motion-picture frames? He thought about the eye’s ability to see consistent colors and forms in a universe that physicists knew to be a shifting quantum kaleidoscope. He thought about clouds, watching them from airplane windows (until, in 1975, his scientific travel privileges were officially suspended on grounds of overuse) or from the hiking trails above the laboratory.

In the mountain towns of the West, clouds barely resemble the sooty indeterminate low-flying hazes that fill the Eastern air. At Los Alamos, in the lee of a great volcanic caldera, the clouds spill across the sky, in random formation, yes, but also not-random, standing in uniform spikes or rolling in regularly furrowed patterns like brain matter. On a stormy afternoon, when the sky shimmers and trembles with the electricity to come, the clouds stand out from thirty miles away, filtering the light and reflecting it, until the whole sky starts to seem like a spectacle staged as a subtle reproach to physicists. Clouds represented a side of nature that the mainstream of physics had passed by, a side that was at once fuzzy and detailed, structured and unpredictable. Feigenbaum thought about such things, quietly and unproductively.

To a physicist, creating laser fusion was a legitimate problem; puzzling out the spin and color and flavor of small particles was a legitimate problem; dating the origin of the universe was a legitimate problem. Understanding clouds was a problem for a meteorologist. Like other physicists, Feigenbaum used an understated, tough-guy vocabulary to rate such problems. Such a thing is obvious, he might say, meaning that a result could be understood by any skilled physicist after appropriate contemplation and calculation. Not obvious described work that commanded respect and Nobel Prizes. For the hardest problems, the problems that would not give way without long looks into the universe’s bowels, physicists reserved words like deep. In 1974, though few of his colleagues knew it, Feigenbaum was working on a problem that was deep: chaos.

WHERE CHAOS BEGINS, classical science stops. For as long as the world has had physicists inquiring into the laws of nature, it has suffered a special ignorance about disorder in the atmosphere, in the turbulent sea, in the fluctuations of wildlife populations, in the oscillations of the heart and the brain. The irregular side of nature, the discontinuous and erratic side—these have been puzzles to science, or worse, monstrosities.

But in the 1970s a few scientists in the United States and Europe began to find a way through disorder. They were mathematicians, physicists, biologists, chemists, all seeking connections between different kinds of irregularity. Physiologists found a surprising order in the chaos that develops in the human heart, the prime cause of sudden, unexplained death. Ecologists explored the rise and fall of gypsy moth populations. Economists dug out old stock price data and tried a new kind of analysis. The insights that emerged led directly into the natural world—the shapes of clouds, the paths of lightning, the microscopic intertwining of blood vessels, the galactic clustering of stars.

When Mitchell Feigenbaum began thinking about chaos at Los Alamos, he was one of a handful of scattered scientists, mostly unknown to one another. A mathematician in Berkeley, California, had formed a small group dedicated to creating a new study of “dynamical systems.” A population biologist at Princeton University was about to publish an impassioned plea that all scientists should look at the surprisingly complex behavior lurking in some simple models. A geometer working for IBM was looking for a new word to describe a family of shapes—jagged, tangled, splintered, twisted, fractured—that he considered an organizing principle in nature. A French mathematical physicist had just made the disputatious claim that turbulence in fluids might have something to do with a bizarre, infinitely tangled abstraction that he called a strange attractor.

A decade later, chaos has become a shorthand name for a fast-growing movement that is reshaping the fabric of the scientific establishment. Chaos conferences and chaos journals abound. Government program managers in charge of research money for the military, the Central Intelligence Agency, and the Department of Energy have put ever greater sums into chaos research and set up special bureaucracies to handle the financing. At every major university and every major corporate research center, some theorists ally themselves first with chaos and only second with their nominal specialties. At Los Alamos, a Center for Nonlinear Studies was established to coordinate work on chaos and related problems; similar institutions have appeared on university campuses across the country.

Chaos has created special techniques of using computers and special kinds of graphic images, pictures that capture a fantastic and delicate structure underlying complexity. The new science has spawned its own language, an elegant shop talk of fractals and bifurcations, intermittencies and periodicities, folded-towel diffeomorphisms and smooth noodle maps. These are the new elements of motion, just as, in traditional physics, quarks and gluons are the new elements of matter. To some physicists chaos is a science of process rather than state, of becoming rather than being.

Now that science is looking, chaos seems to be everywhere. A rising column of cigarette smoke breaks into wild swirls. A flag snaps back and forth in the wind. A dripping faucet goes from a steady pattern to a random one. Chaos appears in the behavior of the weather, the behavior of an airplane in flight, the behavior of cars clustering on an expressway, the behavior of oil flowing in underground pipes. No matter what the medium, the behavior obeys the same newly discovered laws. That realization has begun to change the way business executives make decisions about insurance, the way astronomers look at the solar system, the way political theorists talk about the stresses leading to armed conflict.

Chaos breaks across the lines that separate scientific disciplines. Because it is a science of the global nature of systems, it has brought together thinkers from fields that had been widely separated. “Fifteen years ago, science was heading for a crisis of increasing specialization,” a Navy official in charge of scientific financing remarked to an audience of mathematicians, biologists, physicists, and medical doctors. “Dramatically, that specialization has reversed because of chaos.” Chaos poses problems that defy accepted ways of working in science. It makes strong claims about the universal behavior of complexity. The first chaos theorists, the scientists who set the discipline in motion, shared certain sensibilities. They had an eye for pattern, especially pattern that appeared on different scales at the same time. They had a taste for randomness and complexity, for jagged edges and sudden leaps. Believers in chaos—and they sometimes call themselves believers, or converts, or evangelists—speculate about determinism and free will, about evolution, about the nature of conscious intelligence. They feel that they are turning back a trend in science toward reductionism, the analysis of systems in terms of their constituent parts: quarks, chromosomes, or neurons. They believe that they are looking for the whole.

The most passionate advocates of the new science go so far as to say that twentieth-century science will be remembered for just three things: relativity, quantum mechanics, and chaos. Chaos, they contend, has become the century’s third great revolution in the physical sciences. Like the first two revolutions, chaos cuts away at the tenets of Newton’s physics. As one physicist put it: “Relativity eliminated the Newtonian illusion of absolute space and time; quantum theory eliminated the Newtonian dream of a controllable measurement process; and chaos eliminates the Laplacian fantasy of deterministic predictability.” Of the three, the revolution in chaos applies to the universe we see and touch, to objects at human scale. Everyday experience and real pictures of the world have become legitimate targets for inquiry. There has long been a feeling, not always expressed openly, that theoretical physics has strayed far from human intuition about the world. Whether this will prove to be fruitful heresy or just plain heresy, no one knows. But some of those who thought physics might be working its way into a corner now look to chaos as a way out.

Within physics itself, the study of chaos emerged from a backwater. The mainstream for most of the twentieth century has been particle physics, exploring the building blocks of matter at higher and higher energies, smaller and smaller scales, shorter and shorter times. Out of particle physics have come theories about the fundamental forces of nature and about the origin of the universe. Yet some young physicists have grown dissatisfied with the direction of the most prestigious of sciences. Progress has begun to seem slow, the naming of new particles futile, the body of theory cluttered. With the coming of chaos, younger scientists believed they were seeing the beginnings of a course change for all of physics. The field had been dominated long enough, they felt, by the glittering abstractions of high-energy particles and quantum mechanics.

The cosmologist Stephen Hawking, occupant of Newton’s chair at Cambridge University, spoke for most of physics when he took stock of his science in a 1980 lecture titled “Is the End in Sight for Theoretical Physics?”

“We already know the physical laws that govern everything we experience in everyday life…. It is a tribute to how far we have come in theoretical physics that it now takes enormous machines and a great deal of money to perform an experiment whose results we cannot predict.”

Yet Hawking recognized that understanding nature’s laws on the terms of particle physics left unanswered the question of how to apply those laws to any but the simplest of systems. Predictability is one thing in a cloud chamber where two particles collide at the end of a race around an accelerator. It is something else altogether in the simplest tub of roiling fluid, or in the earth’s weather, or in the human brain.

Hawking’s physics, efficiently gathering up Nobel Prizes and big money for experiments, has often been called a revolution. At times it seemed within reach of that grail of science, the Grand Unified Theory or “theory of everything.” Physics had traced the development of energy and matter in all but the first eyeblink of the universe’s history. But was postwar particle physics a revolution? Or was it just the fleshing out of the framework laid down by Einstein, Bohr, and the other fathers of relativity and quantum mechanics? Certainly, the achievements of physics, from the atomic bomb to the transistor, changed the twentieth-century landscape. Yet if anything, the scope of particle physics seemed to have narrowed. Two generations had passed since the field produced a new theoretical idea that changed the way nonspecialists understand the world.

The physics described by Hawking could complete its mission without answering some of the most fundamental questions about nature. How does life begin? What is turbulence? Above all, in a universe ruled by entropy, drawing inexorably toward greater and greater disorder, how does order arise? At the same time, objects of everyday experience like fluids and mechanical systems came to seem so basic and so ordinary that physicists had a natural tendency to assume they were well understood. It was not so.

As the revolution in chaos runs its course, the best physicists find themselves returning without embarrassment to phenomena on a human scale. They study not just galaxies but clouds. They carry out profitable computer research not just on Crays but on Macintoshes. The premier journals print articles on the strange dynamics of a ball bouncing on a table side by side with articles on quantum physics. The simplest systems are now seen to create extraordinarily difficult problems of predictability. Yet order arises spontaneously in those systems—chaos and order together. Only a new kind of science could begin to cross the great gulf between knowledge of what one thing does—one water molecule, one cell of heart tissue, one neuron—and what millions of them do.

Watch two bits of foam flowing side by side at the bottom of a waterfall. What can you guess about how close they were at the top? Nothing. As far as standard physics was concerned, God might just as well have taken all those water molecules under the table and shuffled them personally. Traditionally, when physicists saw complex results, they looked for complex causes. When they saw a random relationship between what goes into a system and what comes out, they assumed that they would have to build randomness into any realistic theory, by artificially adding noise or error. The modern study of chaos began with the creeping realization in the 1960s that quite simple mathematical equations could model systems every bit as violent as a waterfall. Tiny differences in input could quickly become overwhelming differences in output—a phenomenon given the name “sensitive dependence on initial conditions.” In weather, for example, this translates into what is only half-jokingly known as the Butterfly Effect—the notion that a butterfly stirring the air today in Peking can transform storm systems next month in New York.

When the explorers of chaos began to think back on the genealogy of their new science, they found many intellectual trails from the past. But one stood out clearly. For the young physicists and mathematicians leading the revolution, a starting point was the Butterfly Effect.

Physicists like to think that all you have to do is say, these are the conditions, now what happens next?

—RICHARD P. FEYNMAN

THE SUN BEAT DOWN through a sky that had never seen clouds. The winds swept across an earth as smooth as glass. Night never came, and autumn never gave way to winter. It never rained. The simulated weather in Edward Lorenz’s new electronic computer changed slowly but certainly, drifting through a permanent dry midday midseason, as if the world had turned into Camelot, or some particularly bland version of southern California.

Outside his window Lorenz could watch real weather, the early-morning fog creeping along the Massachusetts Institute of Technology campus or the low clouds slipping over the rooftops from the Atlantic. Fog and clouds never arose in the model running on his computer. The machine, a Royal McBee, was a thicket of wiring and vacuum tubes that occupied an ungainly portion of Lorenz’s office, made a surprising and irritating noise, and broke down every week or so. It had neither the speed nor the memory to manage a realistic simulation of the earth’s atmosphere and oceans. Yet Lorenz created a toy weather in 1960 that succeeded in mesmerizing his colleagues. Every minute the machine marked the passing of a day by printing a row of numbers across a page. If you knew how to read the printouts, you would see a prevailing westerly wind swing now to the north, now to the south, now back to the north. Digitized cyclones spun slowly around an idealized globe. As word spread through the department, the other meteorologists would gather around with the graduate students, making bets on what Lorenz’s weather would do next. Somehow, nothing ever happened the same way twice.

Lorenz enjoyed weather—by no means a prerequisite for a research meteorologist. He savored its changeability. He appreciated the patterns that come and go in the atmosphere, families of eddies and cyclones, always obeying mathematical rules, yet never repeating themselves. When he looked at clouds, he thought he saw a kind of structure in them. Once he had feared that studying the science of weather would be like prying a jack-in-the-box apart with a screwdriver. Now he wondered whether science would be able to penetrate the magic at all. Weather had a flavor that could not be expressed by talking about averages. The daily high temperature in Cambridge, Massachusetts, averages 75 degrees in June. The number of rainy days in Riyadh, Saudi Arabia, averages ten a year. Those were statistics. The essence was the way patterns in the atmosphere changed over time, and that was what Lorenz captured on the Royal McBee.

He was the god of this machine universe, free to choose the laws of nature as he pleased. After a certain amount of undivine trial and error, he chose twelve. They were numerical rules—equations that expressed the relationships between temperature and pressure, between pressure and wind speed. Lorenz understood that he was putting into practice the laws of Newton, appropriate tools for a clockmaker deity who could create a world and set it running for eternity. Thanks to the determinism of physical law, further intervention would then be unnecessary. Those who made such models took for granted that, from present to future, the laws of motion provide a bridge of mathematical certainty. Understand the laws and you understand the universe. That was the philosophy behind modeling weather on a computer.

Indeed, if the eighteenth-century philosophers imagined their creator as a benevolent noninterventionist, content to remain behind the scenes, they might have imagined someone like Lorenz. He was an odd sort of meteorologist. He had the worn face of a Yankee farmer, with surprisingly bright eyes that made him seem to be laughing whether he was or not. He seldom spoke about himself or his work, but he listened. He often lost himself in a realm of calculation or dreaming that his colleagues found inaccessible. His closest friends felt that Lorenz spent a good deal of his time off in a remote outer space.

As a boy he had been a weather bug, at least to the extent of keeping close tabs on the max-min thermometer recording the days’ highs and lows outside his parents’ house in West Hartford, Connecticut. But he spent more time inside playing with mathematical puzzle books than watching the thermometer. Sometimes he and his father would work out puzzles together. Once they came upon a particularly difficult problem that turned out to be insoluble. That was acceptable, his father told him: you can always try to solve a problem by proving that no solution exists. Lorenz liked that, as he always liked the purity of mathematics, and when he graduated from Dartmouth College, in 1938, he thought that mathematics was his calling. Circumstance interfered, however, in the form of World War II, which put him to work as a weather forecaster for the Army Air Corps. After the war Lorenz decided to stay with meteorology, investigating the theory of it, pushing the mathematics a little further forward. He made a name for himself by publishing work on orthodox problems, such as the general circulation of the atmosphere. And in the meantime he continued to think about forecasting.

To most serious meteorologists, forecasting was less than science. It was a seat-of-the-pants business performed by technicians who needed some intuitive ability to read the next day’s weather in the instruments and the clouds. It was guesswork. At centers like M.I.T., meteorology favored problems that had solutions. Lorenz understood the messiness of weather prediction as well as anyone, having tried it firsthand for the benefit of military pilots, but he harbored an interest in the problem—a mathematical interest.

Not only did meteorologists scorn forecasting, but in the 1960s virtually all serious scientists mistrusted computers. These souped-up calculators hardly seemed like tools for theoretical science. So numerical weather modeling was something of a bastard problem. Yet the time was right for it. Weather forecasting had been waiting two centuries for a machine that could repeat thousands of calculations over and over again by brute force. Only a computer could cash in the Newtonian promise that the world unfolded along a deterministic path, rule-bound like the planets, predictable like eclipses and tides. In theory a computer could let meteorologists do what astronomers had been able to do with pencil and slide rule: reckon the future of their universe from its initial conditions and the physical laws that guide its evolution. The equations describing the motion of air and water were as well known as those describing the motion of planets. Astronomers did not achieve perfection and never would, not in a solar system tugged by the gravities of nine planets, scores of moons and thousands of asteroids, but calculations of planetary motion were so accurate that people forgot they were forecasts. When an astronomer said, “Comet Halley will be back this way in seventy-six years,” it seemed like fact, not prophecy. Deterministic numerical forecasting figured accurate courses for spacecraft and missiles. Why not winds and clouds?

Weather was vastly more complicated, but it was governed by the same laws. Perhaps a powerful enough computer could be the supreme intelligence imagined by Laplace, the eighteenth-century philosopher-mathematician who caught the Newtonian fever like no one else: “Such an intelligence,” Laplace wrote, “would embrace in the same formula the movements of the greatest bodies of the universe and those of the lightest atom; for it, nothing would be uncertain and the future, as the past, would be present to its eyes.” In these days of Einstein’s relativity and Heisenberg’s uncertainty, Laplace seems almost buffoon-like in his optimism, but much of modern science has pursued his dream. Implicitly, the mission of many twentieth-century scientists—biologists, neurologists, economists—has been to break their universes down into the simplest atoms that will obey scientific rules. In all these sciences, a kind of Newtonian determinism has been brought to bear. The fathers of modern computing always had Laplace in mind, and the history of computing and the history of forecasting were intermingled ever since John von Neumann designed his first machines at the Institute for Advanced Study in Princeton, New Jersey, in the 1950s. Von Neumann recognized that weather modeling could be an ideal task for a computer.

There was always one small compromise, so small that working scientists usually forgot it was there, lurking in a corner of their philosophies like an unpaid bill. Measurements could never be perfect. Scientists marching under Newton’s banner actually waved another flag that said something like this: Given an approximate knowledge of a system’s initial conditions and an understanding of natural law, one can calculate the approximate behavior of the system. This assumption lay at the philosophical heart of science. As one theoretician liked to tell his students: “The basic idea of Western science is that you don’t have to take into account the falling of a leaf on some planet in another galaxy when you’re trying to account for the motion of a billiard ball on a pool table on earth. Very small influences can be neglected. There’s a convergence in the way things work, and arbitrarily small influences don’t blow up to have arbitrarily large effects.” Classically, the belief in approximation and convergence was well justified. It worked. A tiny error in fixing the position of Comet Halley in 1910 would only cause a tiny error in predicting its arrival in 1986, and the error would stay small for millions of years to come. Computers rely on the same assumption in guiding spacecraft: approximately accurate input gives approximately accurate output. Economic forecasters rely on this assumption, though their success is less apparent. So did the pioneers in global weather forecasting.

With his primitive computer, Lorenz had boiled weather down to the barest skeleton. Yet, line by line, the winds and temperatures in Lorenz’s printouts seemed to behave in a recognizable earthly way. They matched his cherished intuition about the weather, his sense that it repeated itself, displaying familiar patterns over time, pressure rising and falling, the airstream swinging north and south. He discovered that when a line went from high to low without a bump, a double bump would come next, and he said, “That’s the kind of rule a forecaster could use.” But the repetitions were never quite exact. There was pattern, with disturbances. An orderly disorder.

To make the patterns plain to see, Lorenz created a primitive kind of graphics. Instead of just printing out the usual lines of digits, he would have the machine print a certain number of blank spaces followed by the letter a. He would pick one variable—perhaps the direction of the airstream. Gradually the a’s marched down the roll of paper, swinging back and forth in a wavy line, making a long series of hills and valleys that represented the way the west wind would swing north and south across the continent. The orderliness of it, the recognizable cycles coming around again and again but never twice the same way, had a hypnotic fascination. The system seemed slowly to be revealing its secrets to the forecaster’s eye.

One day in the winter of 1961, wanting to examine one sequence at greater length, Lorenz took a shortcut. Instead of starting the whole run over, he started midway through. To give the machine its initial conditions, he typed the numbers straight from the earlier printout. Then he walked down the hall to get away from the noise and drink a cup of coffee. When he returned an hour later, he saw something unexpected, something that planted a seed for a new science.

THIS NEW RUN should have exactly duplicated the old. Lorenz had copied the numbers into the machine himself. The program had not changed. Yet as he stared at the new printout, Lorenz saw his weather diverging so rapidly from the pattern of the last run that, within just a few months, all resemblance had disappeared. He looked at one set of numbers, then back at the other. He might as well have chosen two random weathers out of a hat. His first thought was that another vacuum tube had gone bad.

Suddenly he realized the truth. There had been no malfunction. The problem lay in the numbers he had typed. In the computer’s memory, six decimal places were stored: .506127. On the printout, to save space, just three appeared: .506. Lorenz had entered the shorter, rounded-off numbers, assuming that the difference—one part in a thousand—was inconsequential.

It was a reasonable assumption. If a weather satellite can read ocean-surface temperature to within one part in a thousand, its operators consider themselves lucky. Lorenz’s Royal McBee was implementing the classical program. It used a purely deterministic system of equations. Given a particular starting point, the weather would unfold exactly the same way each time. Given a slightly different starting point, the weather should unfold in a slightly different way. A small numerical error was like a small puff of wind—surely the small puffs faded or canceled each other out before they could change important, large-scale features of the weather. Yet in Lorenz’s particular system of equations, small errors proved catastrophic.

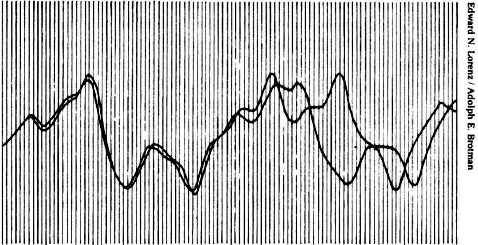

HOW TWO WEATHER PATTERNS DIVERGE. From nearly the same starting point, Edward Lorenz saw his computer weather produce patterns that grew farther and farther apart until all resemblance disappeared. (From Lorenz’s 1961 printouts.)

He decided to look more closely at the way two nearly identical runs of weather flowed apart. He copied one of the wavy lines of output onto a transparency and laid it over the other, to inspect the way it diverged. First, two humps matched detail for detail. Then one line began to lag a hairsbreadth behind. By the time the two runs reached the next hump, they were distinctly out of phase. By the third or fourth hump, all similarity had vanished.
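The rounding episode can be re-enacted in a few lines of Python. This is a minimal sketch under stated assumptions: Lorenz’s twelve-equation 1961 model is not given here, so the code substitutes the three-equation system he published in 1963, with its classic parameters σ = 10, ρ = 28, β = 8/3, integrated by a fixed-step fourth-order Runge-Kutta scheme. The starting values y = z = 1, the step size, and the function names are illustrative choices, not Lorenz’s. One run starts from the stored value 0.506127, the other from the printed value 0.506.

```python
# Sketch: two runs of the Lorenz (1963) three-equation system, one started
# from the full stored value and one from the value rounded for the printout.
# Assumption: a stand-in for Lorenz's 1961 twelve-equation weather model.

SIGMA, RHO, BETA = 10.0, 28.0, 8.0 / 3.0  # classic 1963 parameter values


def lorenz(state):
    """Right-hand side of the Lorenz equations: (dx/dt, dy/dt, dz/dt)."""
    x, y, z = state
    return (SIGMA * (y - x), x * (RHO - z) - y, x * y - BETA * z)


def rk4_step(state, dt):
    """Advance one fixed step with the classical fourth-order Runge-Kutta method."""
    def shifted(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))

    k1 = lorenz(state)
    k2 = lorenz(shifted(state, k1, dt / 2))
    k3 = lorenz(shifted(state, k2, dt / 2))
    k4 = lorenz(shifted(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def run(x0, steps, dt=0.01):
    """Return the x-values of a trajectory starting at (x0, 1, 1)."""
    state = (x0, 1.0, 1.0)
    xs = [state[0]]
    for _ in range(steps):
        state = rk4_step(state, dt)
        xs.append(state[0])
    return xs


full = run(0.506127, 4000)   # six decimal places, as held in memory
rounded = run(0.506, 4000)   # three decimal places, as typed from the printout
gaps = [abs(a - b) for a, b in zip(full, rounded)]

for step in range(0, 4001, 500):
    print(f"t = {step * 0.01:5.1f}   |x_full - x_rounded| = {gaps[step]:.6f}")
```

The printed gap begins at 0.000127. Rather than fading away, it eventually grows as large as the swings of x themselves, the numerical counterpart of the two transparencies drifting out of phase.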

It was only a wobble from a clumsy computer. Lorenz could have assumed something was wrong with his particular machine or his particular model—probably should have assumed. It was not as though he had mixed sodium and chlorine and got gold. But for reasons of mathematical intuition that his colleagues would begin to understand only later, Lorenz felt a jolt: something was philosophically out of joint. The practical import could be staggering. Although his equations were gross parodies of the earth’s weather, he had a faith that they captured the essence of the real atmosphere. That first day, he decided that long-range weather forecasting must be doomed.

“We certainly hadn’t been successful in doing that anyway and now we had an excuse,” he said. “I think one of the reasons people thought it would be possible to forecast so far ahead is that there are real physical phenomena for which one can do an excellent job of forecasting, such as eclipses, where the dynamics of the sun, moon, and earth are fairly complicated, and such as oceanic tides. I never used to think of tide forecasts as prediction at all—I used to think of them as statements of fact—but of course, you are predicting. Tides are actually just as complicated as the atmosphere. Both have periodic components—you can predict that next summer will be warmer than this winter. But with weather we take the attitude that we knew that already. With tides, it’s the predictable part that we’re interested in, and the unpredictable part is small, unless there’s a storm.

“The average person, seeing that we can predict tides pretty well a few months ahead would say, why can’t we do the same thing with the atmosphere, it’s just a different fluid system, the laws are about as complicated. But I realized that any physical system that behaved nonperiodically would be unpredictable.”

THE FIFTIES AND SIXTIES were years of unreal optimism about weather forecasting. Newspapers and magazines were filled with hope for weather science, not just for prediction but for modification and control. Two technologies were maturing together, the digital computer and the space satellite. An international program was being prepared to take advantage of them, the Global Atmospheric Research Program. There was an idea that human society would free itself from weather’s turmoil and become its master instead of its victim. Geodesic domes would cover cornfields. Airplanes would seed the clouds. Scientists would learn how to make rain and how to stop it.

The intellectual father of this popular notion was Von Neumann, who built his first computer with the precise intention, among other things, of controlling the weather. He surrounded himself with meteorologists and gave breathtaking talks about his plans to the general physics community. He had a specific mathematical reason for his optimism. He recognized that a complicated dynamical system could have points of instability—critical points where a small push can have large consequences, as with a ball balanced at the top of a hill. With the computer up and running, Von Neumann imagined that scientists would calculate the equations of fluid motion for the next few days. Then a central committee of meteorologists would send up airplanes to lay down smoke screens or seed clouds to push the weather into the desired mode. But Von Neumann had overlooked the possibility of chaos, with instability at every point.

By the 1980s a vast and expensive bureaucracy devoted itself to carrying out Von Neumann’s mission, or at least the prediction part of it. America’s premier forecasters operated out of an unadorned cube of a building in suburban Maryland, near the Washington beltway, with a spy’s nest of radar and radio antennas on the roof. Their supercomputer ran a model that resembled Lorenz’s only in its fundamental spirit. Where the Royal McBee could carry out sixty multiplications each second, the speed of a Control Data Cyber 205 was measured in megaflops, millions of floating-point operations per second. Where Lorenz had been happy with twelve equations, the modern global model calculated systems of 500,000 equations. The model understood the way moisture moved heat in and out of the air when it condensed and evaporated. The digital winds were shaped by digital mountain ranges. Data poured in hourly from every nation on the globe, from airplanes, satellites, and ships. The National Meteorological Center produced the world’s second-best forecasts.

The best came out of Reading, England, a small college town an hour’s drive from London. The European Centre for Medium Range Weather Forecasts occupied a modest tree-shaded building in a generic United Nations style, modern brick-and-glass architecture, decorated with gifts from many lands. It was built in the heyday of the all-European Common Market spirit, when most of the nations of western Europe decided to pool their talent and resources in the cause of weather prediction. The Europeans attributed their success to their young, rotating staff—no civil service—and their Cray supercomputer, which always seemed to be one model ahead of the American counterpart.

Weather forecasting was the beginning but hardly the end of the business of using computers to model complex systems. The same techniques served many kinds of physical scientists and social scientists hoping to make predictions about everything from the small-scale fluid flows that concerned propeller designers to the vast financial flows that concerned economists. Indeed, by the seventies and eighties, economic forecasting by computer bore a real resemblance to global weather forecasting. The models would churn through complicated, somewhat arbitrary webs of equations, meant to turn measurements of initial conditions—atmospheric pressure or money supply—into a simulation of future trends. The programmers hoped the results were not too grossly distorted by the many unavoidable simplifying assumptions. If a model did anything too obviously bizarre—flooded the Sahara or tripled interest rates—the programmers would revise the equations to bring the output back in line with expectation. In practice, econometric models proved dismally blind to what the future would bring, but many people who should have known better acted as though they believed in the results. Forecasts of economic growth or unemployment were put forward with an implied precision of two or three decimal places. Governments and financial institutions paid for such predictions and acted on them, perhaps out of necessity or for want of anything better. Presumably they knew that such variables as “consumer optimism” were not as nicely measurable as “humidity” and that the perfect differential equations had not yet been written for the movement of politics and fashion. But few realized how fragile was the very process of modeling flows on computers, even when the data was reasonably trustworthy and the laws were purely physical, as in weather forecasting.

Computer modeling had indeed succeeded in changing the weather business from an art to a science. The European Centre’s assessments suggested that the world saved billions of dollars each year from predictions that were statistically better than nothing. But beyond two or three days the world’s best forecasts were speculative, and beyond six or seven they were worthless.

The Butterfly Effect was the reason. For small pieces of weather—and to a global forecaster, small can mean thunderstorms and blizzards—any prediction deteriorates rapidly. Errors and uncertainties multiply, cascading upward through a chain of turbulent features, from dust devils and squalls up to continent-size eddies that only satellites can see.

The modern weather models work with a grid of points on the order of sixty miles apart, and even so, some starting data has to be guessed, since ground stations and satellites cannot see everywhere. But suppose the earth could be covered with sensors spaced one foot apart, rising at one-foot intervals all the way to the top of the atmosphere. Suppose every sensor gives perfectly accurate readings of temperature, pressure, humidity, and any other quantity a meteorologist would want. Precisely at noon an infinitely powerful computer takes all the data and calculates what will happen at each point at 12:01, then 12:02, then 12:03…

The computer will still be unable to predict whether Princeton, New Jersey, will have sun or rain on a day one month away. At noon the spaces between the sensors will hide fluctuations that the computer will not know about, tiny deviations from the average. By 12:01, those fluctuations will already have created small errors one foot away. Soon the errors will have multiplied to the ten-foot scale, and so on up to the size of the globe.

Even for experienced meteorologists, all this runs against intuition. One of Lorenz’s oldest friends was Robert White, a fellow meteorologist at M.I.T. who later became head of the National Oceanic and Atmospheric Administration. Lorenz told him about the Butterfly Effect and what he felt it meant for long-range prediction. White gave Von Neumann’s answer. “Prediction, nothing,” he said. “This is weather control.” His thought was that small modifications, well within human capability, could cause desired large-scale changes.

Lorenz saw it differently. Yes, you could change the weather. You could make it do something different from what it would otherwise have done. But if you did, then you would never know what it would otherwise have done. It would be like giving an extra shuffle to an already well-shuffled pack of cards. You know it will change your luck, but you don’t know whether for better or worse.

LORENZ’S DISCOVERY WAS AN ACCIDENT, one more in a line stretching back to Archimedes and his bathtub. Lorenz never was the type to shout Eureka. Serendipity merely led him to a place he had been all along. He was ready to explore the consequences of his discovery by working out what it must mean for the way science understood flows in all kinds of fluids.

Had he stopped with the Butterfly Effect, an image of predictability giving way to pure randomness, then Lorenz would have produced no more than a piece of very bad news. But Lorenz saw more than randomness embedded in his weather model. He saw a fine geometrical structure, order masquerading as randomness. He was a mathematician in meteorologist’s clothing, after all, and now he began to lead a double life. He would write papers that were pure meteorology. But he would also write papers that were pure mathematics, with a slightly misleading dose of weather talk as preface. Eventually the prefaces would disappear altogether.

He turned his attention more and more to the mathematics of systems that never found a steady state, systems that almost repeated themselves but never quite succeeded. Everyone knew that the weather was such a system—aperiodic. Nature is full of others: animal populations that rise and fall almost regularly, epidemics that come and go on tantalizingly near-regular schedules. If the weather ever did reach a state exactly like one it had reached before, every gust and cloud the same, then presumably it would repeat itself forever after and the problem of forecasting would become trivial.

Lorenz saw that there must be a link between the unwillingness of the weather to repeat itself and the inability of forecasters to predict it—a link between aperiodicity and unpredictability. It was not easy to find simple equations that would produce the aperiodicity he was seeking. At first his computer tended to lock into repetitive cycles. But Lorenz tried different sorts of minor complications, and he finally succeeded when he put in an equation that varied the amount of heating from east to west, corresponding to the real-world variation between the way the sun warms the east coast of North America, for example, and the way it warms the Atlantic Ocean. The repetition disappeared.

The Butterfly Effect was no accident; it was necessary. Suppose small perturbations remained small, he reasoned, instead of cascading upward through the system. Then when the weather came arbitrarily close to a state it had passed through before, it would stay arbitrarily close to the patterns that followed. For practical purposes, the cycles would be predictable—and eventually uninteresting. To produce the rich repertoire of real earthly weather, the beautiful multiplicity of it, you could hardly wish for anything better than a Butterfly Effect.
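The contrast Lorenz drew, between perturbations that stay small and perturbations that cascade, can be seen in an even simpler system. The sketch below is an illustrative stand-in, not Lorenz’s equations: it assumes the one-line logistic map x → r·x·(1 − x), with r = 2.8 (which settles to a steady value) and r = 4.0 (a chaotic setting). The starting point 0.3, the nudge of one part in a billion, and the helper names are hypothetical choices made for the demonstration.

```python
# Sketch: does a tiny nudge die out or grow?  Assumption: the logistic map is
# used as a stand-in for a weather model; r values and x0 are illustrative.


def logistic(r, x):
    """One step of the logistic map x -> r * x * (1 - x)."""
    return r * x * (1.0 - x)


def gap_history(r, x0=0.3, delta=1e-9, steps=60):
    """Run two copies started delta apart; return |difference| after each step."""
    a, b = x0, x0 + delta
    gaps = [abs(a - b)]
    for _ in range(steps):
        a, b = logistic(r, a), logistic(r, b)
        gaps.append(abs(a - b))
    return gaps


calm = gap_history(2.8)     # settles to a steady state: the nudge shrinks away
chaotic = gap_history(4.0)  # chaotic regime: the nudge roughly doubles per step on average

for n in (0, 10, 20, 30, 40, 50, 60):
    print(f"step {n:2d}   r=2.8: {calm[n]:.1e}   r=4.0: {chaotic[n]:.1e}")
```

In the calm case the two copies stay arbitrarily close, so knowing one tells you the other; in the chaotic case the nudge grows until the copies are unrelated, which is the trade Lorenz described between rich, aperiodic behavior and predictability.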

The Butterfly Effect acquired a technical name: sensitive dependence on initial conditions. And sensitive dependence on initial conditions was not an altogether new notion. It had a place in folklore:

“For want of a nail, the shoe was lost;

For want of a shoe, the horse was lost;

For want of a horse, the rider was lost;

For want of a rider, the battle was lost;

For want of a battle, the kingdom was lost!”

In science as in life, it is well known that a chain of events can have a point of crisis that could magnify small changes. But chaos meant that such points were everywhere. They were pervasive. In systems like the weather, sensitive dependence on initial conditions was an inescapable consequence of the way small scales intertwined with large.

His colleagues were astonished that Lorenz had mimicked both aperiodicity and sensitive dependence on initial conditions in his toy version of the weather: twelve equations, calculated over and over again with ruthless mechanical efficiency. How could such richness, such unpredictability—such chaos—arise from a simple deterministic system?

LORENZ PUT THE WEATHER ASIDE and looked for even simpler ways to produce this complex behavior. He found one in a system of just three equations. They were nonlinear, meaning that they expressed relationships that were not strictly proportional. Linear relationships can be captured with a straight line on a graph. Linear relationships are easy to think about: the more the merrier. Linear equations are solvable, which makes them suitable for textbooks. Linear systems have an important modular virtue: you can take them apart, and put them together again—the pieces add up.
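The modular virtue has a compact statement: a linear rule f satisfies f(a + b) = f(a) + f(b), so its response to a combined input is the sum of its responses to the pieces. The toy check below assumes two made-up rules, multiplying by 3 and squaring, chosen only for illustration; testing a handful of pairs is a spot check, not a proof of linearity.

```python
import math


def linear(x):
    """A linear rule: the output is strictly proportional to the input."""
    return 3.0 * x


def nonlinear(x):
    """A nonlinear rule: the output grows with the square of the input."""
    return x * x


def pieces_add_up(f, pairs):
    """True if f(a + b) equals f(a) + f(b) for every (a, b) pair tried."""
    return all(math.isclose(f(a + b), f(a) + f(b)) for a, b in pairs)


pairs = [(2.0, 5.0), (-1.5, 4.0), (0.25, 0.75)]
print(pieces_add_up(linear, pairs))     # True
print(pieces_add_up(nonlinear, pairs))  # False
```

For the squaring rule, 7² = 49 while 2² + 5² = 29: the whole is not the sum of its parts, which is exactly what makes nonlinear systems resist being taken apart and reassembled.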

Nonlinear systems generally cannot be solved and cannot be added together. The character of the equation